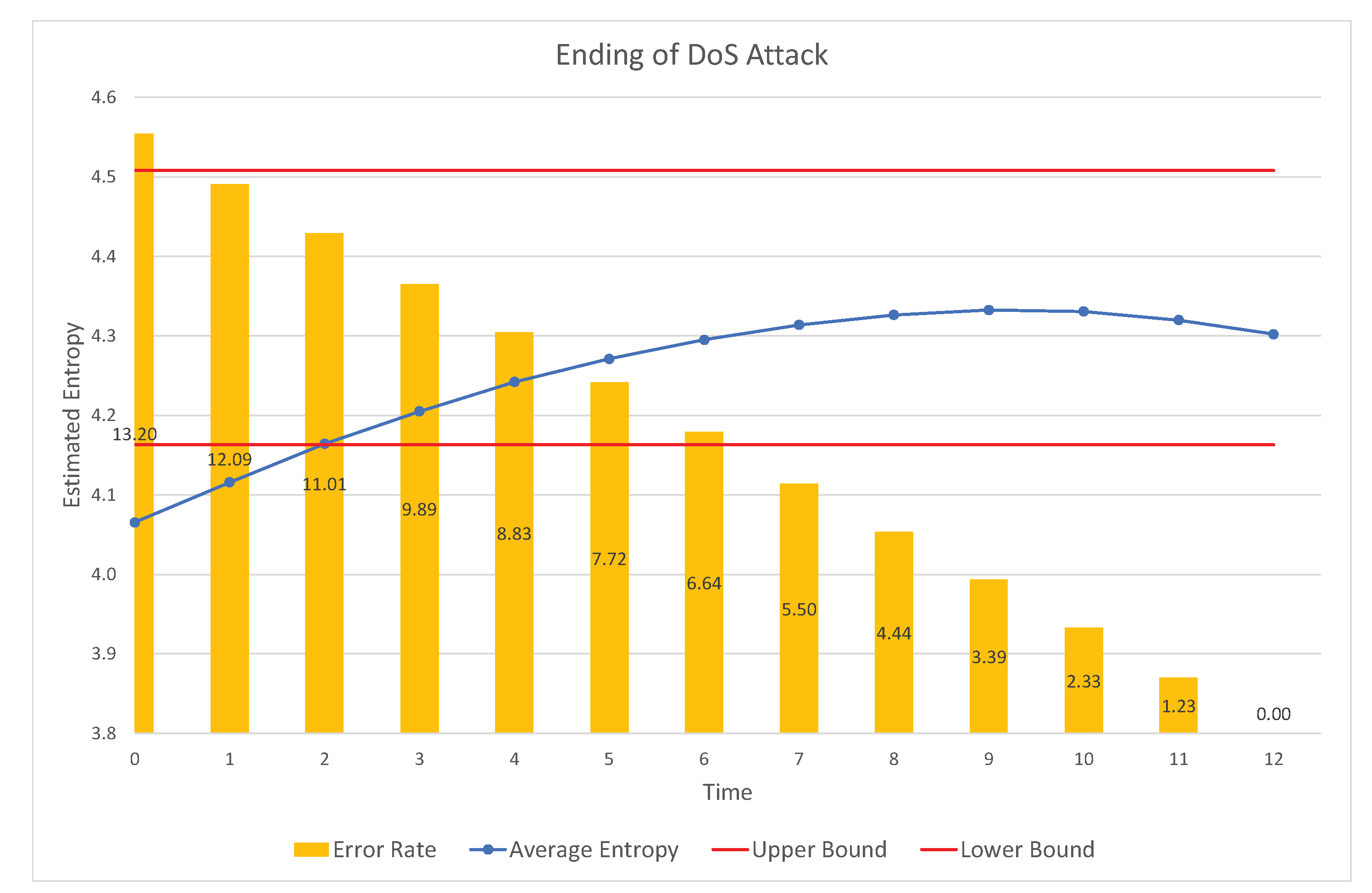

Shannon made entropy the cornerstone on which he built his theory of information and communication [7]. Since then, entropy has played a central role in many-particle physics, notably in the description of non-equilibrium processes through the second law of thermodynamics and the principle of maximum entropy production [5,6].

Rényi entropy is a generalization of Shannon entropy, which plays an important role in information theory. In this paper, inspired by the Rényi entropy, we propose the complex-valued Rényi entropy, which measures the uncertain information of a complex-valued probability within the framework of complex numbers. This is also the first time uncertain information has been measured in the complex space.

Rényi entropy. Let $X$ be a discrete random variable taking values in a sample space $\{x_1, \dots, x_n\}$ and distributed according to $P_X = (p_1, \dots, p_n)$. The Rényi entropy of $X$ of order $\alpha$ ($\alpha > 0$, $\alpha \neq 1$) is defined as

$$R_\alpha(X) = \frac{1}{1-\alpha} \log \sum_{x} P_X(x)^{\alpha}. \tag{1}$$

For two discrete random variables $X$ and $Y$, with joint density $P_{XY}$, the joint Rényi entropy is
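As a rough illustration of the definition of the Rényi entropy of order α, here is a minimal Python sketch. The function name `renyi_entropy`, the use of the natural logarithm, and the handling of α = 1 by falling back to the Shannon entropy (the limiting case) are assumptions made for this example, not something taken from the text above.

```python
import math


def renyi_entropy(probs, alpha):
    """Rényi entropy of order alpha (natural log) of a discrete distribution.

    `probs` is a sequence (p_1, ..., p_n) of probabilities summing to 1.
    For alpha == 1 the Shannon entropy is returned, which is the limit of
    the Rényi entropy as alpha -> 1 (an assumption of this sketch).
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if any(p < 0 for p in probs) or abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probs must be non-negative and sum to 1")

    # Zero-probability outcomes contribute nothing to either sum.
    support = [p for p in probs if p > 0]

    if math.isclose(alpha, 1.0):
        # Shannon entropy: -sum p log p
        return -sum(p * math.log(p) for p in support)

    # R_alpha(X) = 1 / (1 - alpha) * log( sum_x P_X(x)^alpha )
    return math.log(sum(p ** alpha for p in support)) / (1.0 - alpha)


if __name__ == "__main__":
    skewed = [0.5, 0.25, 0.25]
    for a in (0.5, 1.0, 2.0):
        print(a, renyi_entropy(skewed, a))
```

For a uniform distribution on n outcomes the value is log n for every order α, and for a fixed distribution the entropy does not increase as α grows, which gives two quick sanity checks for the sketch.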
